Introduction

Regulars of With a Terrible Fate know that Nier is near and dear to my heart because it was the game that first motivated me to write analytically about video games, even before my work on Majora’s Mask. You can therefore imagine how excited I was when the game was given a sequel, NieR: Automata. While I was initially worried that it would fall short of its predecessor, I think it’s safe to say that NieR: Automata ended up being even philosophically richer than Nier. To that end, it’s time that NieR: Automata met With a Terrible Fate.

Regular readers also know that my analytic method with respect to video games typically focuses on clarifying the precise and often surprising relations in which the players of video games stand to the stories of video games. In that regard, this paper is no exception: I want to convince you that you, the player, are involved in the story of NieR: Automata in a surprising and illuminating way. But, for the sake of full transparency, I’ll start by warning you that, as you might imagine, this work contains LIBERAL SPOILERS for NieR: Automata, Nier, and Drakengard (starting in the next paragraph!). If you’re at all familiar with Nier and/or NieR: Automata, then you know that the stories of these games deeply depend on facts that are only revealed quite late in the game; so, if you haven’t yet played through the games, I wouldn’t recommend reading this yet.

With that in mind, let me offer a roadmap of the paper. I frame this analysis as the attempt to answer a seemingly simple question: “Where are the humans in NieR: Automata?” Now, if you’ve only just started the game, you’d probably say, “They’re on the moon, obviously”; if instead you’ve played through the whole game, you’d probably say that this is an ill-posed question because, at the time of NieR: Automata’s events, humans are long extinct: there are only machine lifeforms, androids, animals, plants, and pods. Fair enough; however, it’s undeniable that the presence of humanity is virtually ubiquitous in the world of the game. Machine lifeforms slowly recover human culture and become sentient; androids identify themselves as sentient even before machine lifeforms do; by the end of Ending E, even the simple pods that accompany androids are beginning to exhibit “human” traits like compassion and attachment. So when I ask where the humans are in the game, what I’m really asking is what the origin is of all these specifically human properties that the various organisms in the game eventually instantiate, especially the property of sentience, or self-awareness. You might think the answer is simply that these human properties originated in the humans that went extinct long ago in the game’s world; after all, the game mentions that the human characteristics of the androids are the result of their human creators.

It’s this second response that I want to challenge: I think that the player, rather than the extinct humans of the game’s world, is the source of the sentience that emerges in androids, machine lifeforms, and pods throughout the course of the game. I begin by clarifying the scope of my thesis, in an effort to show that, so far as I can see, my claims don’t threaten what one might call “canonical” interpretations of the game’s story. Then, I use an analysis of player-avatar relations to argue that the player is the origin of sentience and humanity in Nier and NieR: Automata. This, I think, is a fairly easy thesis to endorse. After this is established, I argue for the significantly more controversial thesis that the fictional world of NieR: Automata is actually nothing more than a data structure; that is to say, it is true within the fiction of the game that the world is just a computer simulation being manipulated by a real player. Finally, I conclude by explaining why these theses matter for understanding NieR: Automata: the game’s metaphysics, I argue, establishes unexpected, fictional, ethical mandates that bind the player as they engage the game.

1. Preliminaries

In the past, especially in my initial, four-year-old work on Nier, I have sometimes failed to be sufficiently clear about the scope and level at which my analyses of video games apply, which has led to some confusion about how my work ought to be evaluated in comparison with competing analyses or interpretations of the games in question. This is an especially acute danger when discussing NieR: Automata because there are myriad possible ways in which one could interpret “the game.” To name a few: are you analyzing the game as a stand-alone narrative, or as the third installment in the three-part narrative sequence of Drakengard, Nier, and NieR: Automata? Are you analyzing the game as a set of equally possible narratives with 26 different endings, or are you analyzing the single narrative and ending within the game that you take to constitute the “true” story? And so on. There’s no obvious reason to endorse any one such analytical approach over the others; what matters is being clear on precisely what your analytical approach is, so as to avoid having it confused with other approaches in the vicinity. By clarifying my own approach in this way, I aim to show why the claims it generates about the game are fairly compatible with a wide array of other plausible analyses of the game.

To see what my project is up to, we need to distinguish between what we might think of as two “levels” of analysis. Call the first level of analysis ‘Narrative-Event Analysis’ (or ‘NE Analysis’), and define it as follows.

NE Analysis: The analysis or interpretation of the various events of a narrative, and of how those events are interrelated.

This is what most video game theorists and art critics are up to: they take the events of a given story and try to make meaning out of those events in a particular way. When YouTube personalities analyze and explain the lore of the Dark Souls games, they’re engaged in NE Analysis; when you try to sort out where the story of The Legend of Zelda: Breath of the Wild fits into the larger set of Zelda timelines, you’re engaged in NE Analysis; when you’re explaining how on earth the Shadowlord of Nier logically fits into Ending E of Drakengard, you’re engaged in NE Analysis. This is the time-honored tradition of taking the various events of a story and seeing how they best cohere with one another to form one meaningful, comprehensible work of art.

The question of where Breath of the Wild fits into Zelda timelines is a question for NE Analysis.

Now consider an altogether different level of analysis. Call it ‘Narrative-Grounding Analysis’ (‘NG Analysis’), and define it as follows.

NG Analysis: The analysis or interpretation of the metaphysical foundation in virtue of which the various events of a narrative obtain, and how that metaphysical foundation relates to the events that it actualizes.

Put this way, NG Analysis might sound unfamiliar, but (1) I think we often ask ourselves NG-Analysis questions about stories, and (2) this is the exact sort of analysis I’ve been applying to video games for several years now on this site. When you ask yourself what makes the constant regeneration of the Chosen Undead in Dark Souls possible, you are engaging in NG Analysis; when someone explains what it is about the world of Zelda that makes time travel possible, they are engaging in NG Analysis; when I am analyzing what makes it possible for machines and Replicants to become sentient in the world of Nier and NieR: Automata, I am engaging in NG Analysis. This is also the sort of analysis I was undertaking when I claimed that: the player is the source of moral reality in Majora’s Mask; the narrative of BioShock Infinite is a universal collapse event caused by the player; and the entirety of Bloodborne is a dream.

My analysis claiming that all of Bloodborne is a dream is an example of NG Analysis.

What’s crucial to notice about these two levels of analysis is that neither level of analysis, at least in any obvious way, makes claims about the other level of analysis. Insofar as this is true, the video game theorist is licensed to engage in NE Analysis about a game without worrying about what the right NG Analysis of the game is, and vice versa. For instance, suppose that you’re trying to decide between two competing theories of how Link is able to travel through time in the Zelda games: according to one theory, this is made possible by the will of the Goddess Hylia, and according to the other theory, it is made possible because Link is some special kind of entity that can freely move through time in a way that ordinary Hylians can’t.[1] These two theories are trying to explain the same narrative events, and they can’t both be right; that means that we have to choose between them if we want to have a correct understanding of the game’s story (assuming that one of these two theories is correct, as opposed to both of them being incorrect). However, neither of these theories is going to have anything to say about how time travel in the game works: they’re only going to say what makes time travel in the game possible. So consider a question like this: in The Legend of Zelda: Ocarina of Time, how do the actions of Adult Link affect the events that Young Link experiences seven years earlier? (Think of an example like the Spirit Temple, where Adult Link and Young Link are apparently “interacting” with each other across time.) This is a question about how the events of the game’s narrative relate to one another—which is to say, it’s a question for NE Analysis to resolve. 
Whether time travel is made possible by Hylia or by Link’s constitution isn’t going to have any direct bearing on how the events concerning Adult Link relate to the events concerning Young Link; to answer this question, we instead need an NE Analysis specific to those events (e.g., “time travel works by allowing Adult Link to rewrite events of the past”).

I’ve only aimed to show here that NE Analysis and NG Analysis are indeed separate levels of analysis. When you’re engaged in NG Analysis, you’re analyzing something fundamentally different from what you analyze in NE Analysis: in NG Analysis you analyze the metaphysical foundation of events in a story, whereas in NE Analysis you analyze the events themselves. Why does this matter as a preliminary to my analysis of NieR: Automata? Well, there are many interesting questions about how the events of NieR: Automata relate to each other, to the events of Nier, and to the events of Drakengard. To name a few potential questions of this sort: where did the aliens in NieR: Automata come from? How, if at all, does White Chlorination Syndrome relate to the Black Scrawl? What effects does 2B’s consciousness have on A2, after A2 kills 2B? It should be clear by now, I hope, that these are all questions that NE Analysis is tasked with answering. And it bears mentioning that the “canon interpretations” of a story that people typically talk about (roughly, the “correct” interpretation of a story’s events, often deemed correct simply because the author says it’s correct) are interpretations that similarly belong to NE Analysis. Canonical interpretations of narratives rarely have anything substantive to say about the metaphysical grounds of a narrative’s events.

Recall that what I’m interested in pursuing in this paper is a matter of NG Analysis: namely, the question of what it is in virtue of which apparently human properties obtain in the world of NieR: Automata. Given what I’ve said, it follows that my arguments in this paper won’t directly bear on “canon” issues of how to properly interpret the events of the game on the level of NE Analysis. Put differently: if you already have some favorite theory about how the events of the game are interrelated, my work here doesn’t necessarily pose a threat to that theory.[2] If, on the other hand, you have a favorite theory about the metaphysical grounds of the narrative’s events (and I frankly haven’t seen any such theories out there yet), then my theory is a competitor to that theory, and you’ll have to see which seems more plausible to you upon reflection.

2. Becoming Human

I’ve established the level on which I intend my analysis to operate: in this paper, we’re exploring the metaphysical foundations of NieR: Automata. In this section, I offer an argument to the conclusion that the humanity of the player is what metaphysically grounds an entity’s “becoming sentient” (i.e. being self-aware and instantiating human properties) in NieR: Automata and Nier. I’ll make this argument by first focusing on the nature of maso and Project Gestalt, and then by extending it to the nature of machine lifeforms, androids, and pods. This will directly lead us to the argument of the next section: that the world of NieR: Automata is a data structure.

The Giant/Queen-beast, from which the maso originates.

‘Maso’ is a substance that originated in the ending of Drakengard that served as the impetus for Nier and (subsequently) NieR: Automata. Very roughly, in Ending E of Drakengard, the protagonists confront and destroy an otherworldly “Giant” (also known as the Queen-beast) that subsequently releases maso, an otherworldly, “multidimensional” particle. The maso induces ‘White Chlorination Syndrome’ (‘WCS’), a disease that forces a choice on humans: either form a pact to become the servant of a god from another world, or perish by turning into a statue made of salt. Humans are able to avoid this disease by using maso to develop “multidimensional technology” that separates their souls from their bodies until a time at which WCS has died off, at which point humans would reunite their souls with their bodies (this plan of defense against the disease was called ‘Project Gestalt’ and was central to the plot of Nier). However, the soulless bodies preserved for humans, entities called ‘Replicants’, ended up developing “a sense of self” (i.e. sentience). This advent of self-awareness in Replicants led to a corresponding loss of sentience in the separated souls of humans, called ‘Gestalts’. This loss of sentience was known as ‘relapsing’ and caused the Gestalts to turn into aggressive, animalistic creatures (known to Replicants as ‘shades’).

Replicant Nier and his daughter, Replicant Yonah.

The protagonist and avatar of Nier—technically named by the player, but called ‘Nier’ for convenience—is the Replicant corresponding to “the Original” Gestalt, someone whose data was central to the development and sustainability of Project Gestalt. The story of Nier (again, very roughly) follows Nier’s struggle to save his daughter—also a Replicant—from “the Shadowlord”—an entity that Nier sees as an enemy, but who is actually his own Gestalt (“the Original”) trying to reclaim his daughter’s Replicant. When Nier kills his Gestalt, he effectively derails Project Gestalt, which leads to the eventual extinction of humanity.

Replicant Nier killing the Shadowlord, his own Gestalt.

That’s a far-too-condensed reconstruction of what I take to be the key and relatively uncontroversial elements of the narrative that begins with Ending E of Drakengard and proceeds through the conclusion of Nier. The key points to notice for my purposes are: (1) maso is a multidimensional substance that binds humans to gods from other worlds, (2) the avatar of Nier is the Replicant that corresponds to Project Gestalt’s Original, and (3) sentience is more-or-less zero sum between a given Gestalt-Replicant pair: if the Replicant gains it, then the Gestalt starts down the road to losing it (and metaphysically, this seems reasonable: if a Gestalt-Replicant pair is supposed to be just one conscious entity, split into body and soul, presumably it would be able to sustain just one consciousness).

I think that, merely from the fairly uncontroversial facts I’ve highlighted about the story, a surprising but intuitive thesis about the source of sentience in Nier presents itself: namely, the player of the game is the source of sentience in Nier (the avatar) and other Replicants. Notice again that maso, according to Drakengard, is a substance that straddles dimensions and binds people to the gods of other worlds. It seems appropriate and explanatorily powerful to say that, as an avatar, Nier—again, the Replicant corresponding to the Original—is importantly bound to the player of the game, an extra-dimensional entity that determines Nier’s actions and choices throughout the game’s story. Given that we know maso renders humans the servants of gods, and Gestalt technology is derived from maso, we can explain Nier’s sentience by saying that he inherits it from the extra-dimensional entity to which he is bound: a sentient, human player.[3]

A potential objection: what about the fact that other Replicants gain sentience in Nier? These other Replicants are clearly not avatars, and so it can’t be the case that sentience in Nier is categorically derived from the player’s sentience.

My response: recall that Nier’s Gestalt has the special status of being “the Original” in Project Gestalt. To my knowledge, this status is never given a full and precise explanation, except to say that this Gestalt uniquely makes Project Gestalt possible, and that Project Gestalt is irreparably derailed when Nier kills his Gestalt. Given this special priority that Nier and his Gestalt have in the efficacy and progress of Project Gestalt, it strikes me as plausible to suppose that Nier’s sentience would play a causally decisive role in the emergent sentience of other Replicants. That is to say, Nier’s status as the Original’s Replicant makes it the case that his acquired sentience—which, again, he inherits from the player—subsequently induces sentience in other Replicants. So, even while the other Replicants don’t directly inherit sentience from the player, their sentience is still derived from the player, given the causally decisive role of Nier’s sentience.

Another potential objection: the player of a video game is a real person, not a fictional entity. So they simply couldn’t be part of the game’s narrative: real things can’t causally interact with fictional things in that way (e.g., I, a real person, can’t stop Tom Sawyer from painting a fence in the fiction of Mark Twain).

My reply: no doubt this is true, but fictions give real people fictional roles to play all the time. Think, for example, of second-person novels, which put the reader in the fictional role of whomever the narrative is addressing. And even though it might seem unintuitive or metaphysically unhappy to say that the player, from “another dimension,” is influencing the actions of Nier in his dimension, recall that the narrative already allows for this kind of influence even prior to my interpretation: again, WCS induces pacts between humans and gods from other worlds. So my analysis is metaphysically of a piece with the rest of Nier’s narrative.

I think my analysis is illuminating here because it links the rise of sentience in Replicants to Project Gestalt’s origins in maso; it also gives explanatory and metaphysical force to Nier’s status as an avatar (that is to say, Nier is an avatar because his maso-derived connection to the player allows the player to determine his actions). The analysis also strikes me as better than saying that Replicants “simply became sentient,” because, by linking sentience in Nier to the human playing the game, we are able to identify the sentience of Replicants as derived from a real source of sentience (i.e. an actual human).

So much for the good reasons to accept the player as the ground of sentience in Nier; the question now is, can we extend this metaphysical account of sentience to the world of NieR: Automata? Yes, but admittedly we can’t do it directly: given that YoRHa androids, machine lifeforms, and pods aren’t the direct products of Project Gestalt, we can’t simply say that maso technology allows the player to influence them all, and leave it at that. However, I think we can make an argument by inference-to-the-best-explanation that gives us good reason to believe that the metaphysical account we’ve given in Nier does extend to NieR: Automata; we’ll just have to invoke some data about machine cores and the player’s metaphysical relation to the game’s world.

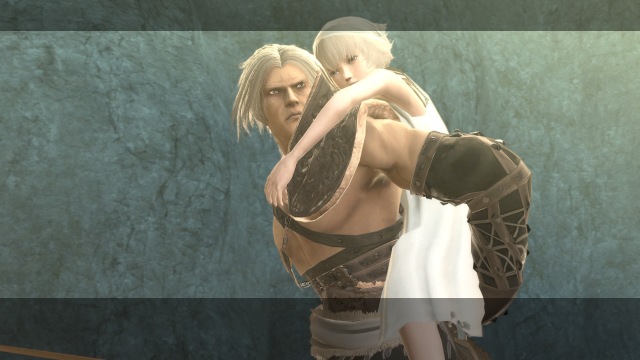

2B and 9S with their black boxes, fashioned from recycled machine cores.

First, machine cores. These are the central components of the machine lifeforms that the YoRHa androids in NieR: Automata battle without end; it’s also revealed late in the game that these cores are recycled and used as “black boxes” to power YoRHa androids. There are two crucial upshots about these machine cores. The first upshot is that information archives provided in the game reveal that the cores are responsible for the structure of the consciousness of whatever entity they’re powering; we know this because the archives say that, in virtue of both machine lifeforms and YoRHa androids using machine cores, “it could be said that the consciousnesses of YoRHa units and machine lifeforms share the same structure.” The second upshot is that machine cores are well-suited to represent consciousness in entities that are designed to ultimately be destroyed. We know this because archives within the game report that “black boxes were installed [in androids] after determining that it would be inhumane to install standard AI in androids that are ultimately destined for disposal.” Given that YoRHa androids are apparently sentient, and their machine-core-powered black box is responsible for the structure of the androids’ consciousness, it follows that we can understand what grounds sentience and humanity in NieR: Automata by understanding how machine cores are conducive to sentience and humanity.

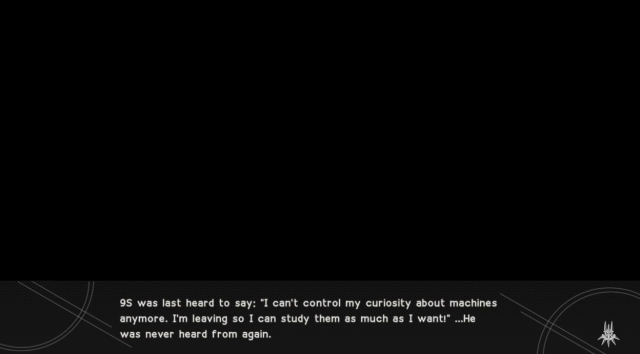

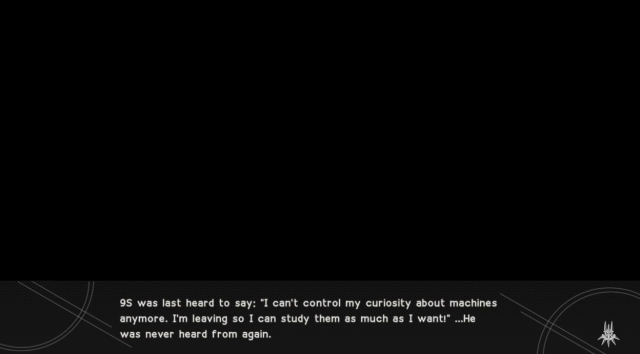

Now, consider the question of what sort of metaphysical relation the player stands in to the world of NieR: Automata. What, in other words, does the player’s access to the game’s world amount to, within the fiction of the game? It seems to me that players have a fairly direct form of access to and influence on the game’s world. The game’s manifold endings exemplify this: the player’s choices are often able to determine not only the actions of their avatars, but also the desires and motivations of their avatars. For example, if the player directs 9S away from his initial mission helping 2B, the game ends with text saying: “9S was last heard to say: ‘I can’t control my curiosity about machines anymore. I’m leaving so I can study them as much as I want!’ He was never heard from again” (this is Ending G). Similarly, if the player has 2B kill the machines that putatively want to establish a peace treaty with Pascal’s village, the game ends with text saying: “In a sudden fit of temper, 2B wiped out the machine lifeforms, and no peace was born that day” (this is Ending J). This tight connection between the player’s choices and the mental states attributed by the game to the avatar androids suggests that the player does have some measure of influence over not just the androids’ actions, but over their psychology as well. Even more to the point, players can alter the very constitution of their avatar androids by changing the plug-in chips that determine their various characteristics, even going so far as to remove their OS chip if they wish. In other words, players seem to have deep and pervasive control over myriad constitutive features of their avatar androids’ identities.

Ending G, in which the player directs 9S away from his mission supporting 2B.

In various ways, the activities of the pods that accompany avatar androids 9S, 2B, and A2 also suggest that the player enjoys a direct presence within the fiction of the game. In particular, notice that when the player uses her controls to change the “camera’s” perspective on the game, she doesn’t actually move some disembodied, third-person viewpoint: instead, as she moves the camera, the pod following her avatar moves accordingly, in such a way that the pod is always facing straight ahead from the player’s perspective. This establishes a sense in which the pod is directly connecting the player to the world of the game, thereby allowing the player to really be present within the world of the fiction rather than merely viewing the fiction from an external position in the real world.

Notice that even as 2B faces orthogonally to the camera view, her pod matches the direction of the camera view.

Now we have on the table all the considerations needed to argue to the conclusion that the player is the metaphysical source of sentience in NieR: Automata. First, the consciousness manifested in YoRHa androids and machine lifeforms isn’t standard AI; given the context, which says that standard AI would have been inhumane for disposable androids, we can safely infer that the consciousness made possible by machine cores is somehow “less authentic,” “less genuine,” or less “sui generis” than “standard AI,” where “standard AI” probably means genuinely, intrinsically self-conscious AI of the sort that we still have yet to achieve in the real world. We also know that the player of NieR: Automata has apparently direct access to the game’s fictional world: the pods act as a direct means of access within the fiction by which the player can manipulate the world, and the player’s choices are reflected in the actions, psychology, and basic makeup of the androids; further, the iterative structure of androids’ existence—constantly dying, being re-instantiated, and recovering their old data—closely mirrors the player’s actions of guiding them through the game, failing, reconstituting the android, and recovering their data. Now, returning to my analysis of Nier and assuming that it’s correct, we also know that technology exists (namely, the maso technology of Project Gestalt) that allows humans from other dimensions to impart their sentience to otherwise non-sentient entities. Given that such technology already existed, I think we can infer that the best explanation of the sentience that emerges in androids and machine lifeforms is that, through the construction of black boxes from machine cores, androids were able to induce the same sort of relationship between android and player that previously existed between Nier and player. 
And, just as the player’s sentience proliferated throughout Replicants in Nier, so too was the player’s sentience diffused in NieR: Automata amongst beings with the relevant kind of technology—that is, beings with machine cores. This explains why both YoRHa androids and machine lifeforms are susceptible to becoming sentient.

What about the pods? It’s clear enough by the end of NieR: Automata’s Ending E that the pods are also at least on their way to sentience, if not fully sentient; yet there’s no evidence (so far as I know) that they’re also powered by machine cores. So how can my account explain their sentience, since they presumably wouldn’t be connected to the player’s sentience via machine cores? I think the answer here is straightforward. Recall that, on my account, pods act as conduits that directly connect the player to the world of the game. Given this direct relationship between the player and the pods, there isn’t any need to appeal to machine cores in explaining the pods’ emergent sentience: we can instead say that, since the pods already possess basic operational intelligence and they’re being used to directly transmit the player’s agency to the game’s world, it’s only natural that the pods could somehow “pick up on” or learn to emulate the consciousness of the player to whom they are intimately connected. This response is admittedly somewhat more vague than the analyses of sentience in androids and machine lifeforms, but this vagueness is a direct result of there being proportionately less information available about the structure and ontology of pods. Thus, I don’t think the additional vagueness in my account should speak against my analysis per se; we should instead just be disappointed that there isn’t more documentation about pods within the world of the game.

If my arguments in this section are right, then the forms of sentience that emerge in Replicants, YoRHa androids, machine lifeforms, and pods are all deeply related in a surprising and informative way: all of them are derived, directly or indirectly, from the metaphysically foundational sentience of the video games’ player. As I emphasized at the outset, this Narrative-Grounding Analysis needn’t settle the most pressing questions of how to interpret the actual events of the games: my analysis, for example, needn’t bear on the question of who the aliens are who brought the machine lifeforms to Earth, nor need it bear on the question of which of NieR: Automata’s endings is the “true” ending (if that’s even an intelligible question to begin with). What the analysis instead succeeds in doing is establishing a crucial link between the player of the Nier games and the content of those games: the player doesn’t just determine what the avatars do in those games; the player actually enables entities in those games to become sentient within the fiction, in a metaphysically robust sense.

3. Playing a Fictional Video Game

I think that the above analysis is the best account of the metaphysical foundation of sentience across both Nier and NieR: Automata; however, I think that NieR: Automata suggests a further, much more radical interpretation of the fiction’s metaphysics, one which invites us to reinterpret the precise significance of the player’s sentience and agency on the game’s world. I want to emphasize, however, that this further interpretation is both (1) much more speculative than the above analysis and (2) theoretically separable from the above analysis: that is to say, you can consistently endorse my above analysis while also rejecting the argument presented in this section. All the same, I would be remiss not to mention this more radical interpretation of the game’s world, because there is at least some evidence for it within the game and it allows us to conceptualize the game in an extremely unexpected, unorthodox, and challenging way.

The central thrust of this more controversial interpretation is that it is true within the fiction of Drakengard, Nier, and NieR: Automata that the world is nothing more than a data structure being manipulated by a human from the outside. In other words, put roughly, this interpretation claims that it’s true within the fiction of the video game that the world is nothing more than a video game. Just to be clear about how radical this thought is: our typical assumption with the fictional worlds of video games is that these worlds, within the context of the fiction, are real. For example, it doesn’t seem to be true within the fiction of The Legend of Zelda that the world is an interactive data structure; instead, it seems true within the fiction that there is a real world called Hyrule, in which Link really performs certain actions, quests, etc. The thesis I’m exploring in this section is claiming that it isn’t true within the fiction of Nier games that there is a “real world” in this sense: instead, within the context of the fiction, there is a computer-generated world with which a human player interacts.[4]

I see two central data in NieR: Automata that support the thesis that the fiction of the Nier games represents a pure data structure: the first datum is information about the overarching “network” that governs machine lifeforms in the game, and the second datum is the way in which the game’s content is generally represented to the player. I consider each datum in turn.

After the player completes Ending E of the game (assuming the player doesn’t delete her data—more on that in the next section), a “Machine Research Report” is added to her information archive. The report, written by Information Analysis Officer Jackass, details the network that governs the machine lifeforms, explaining how it was created and how it evolved into a “meta-network,” codenamed ‘N2’ (typically represented within the game as two Red Girls). It offers the following information about the machines, their network, and their meta-network.

A representation of N2 as one of the Red Girls.

“Machine lifeforms are weapons created by the aliens. The only command given for their behavior was to ‘defeat the enemy’. However, it appears that their capacity for growth and evolution went too far, and they eventually turned on and killed their creators.

“At this point, machine lifeforms recognized that the goal of ‘defeating the enemy’ actually REQUIRED an enemy. In order to maintain this singular objective, they reached the contradictory conclusion that their current enemies—the androids—could not be annihilated completely, lest they no longer have an enemy to defeat.

“In order to resolve this inherent contradiction, the machine lifeforms began to intentionally cause deficiencies in their network, diversifying the vectors of evolution for all machines. This is the cause behind some of the more ‘special’ machine lifeforms, such as Pascal and the Forest King.

“Meanwhile, the deficient network began repeating a process of self-repair while incorporating surrounding information, until it finally reached a fixed state as a new form of network. Traces of information regarding human memories from the quantum server of the old model were discovered, indicating that it had integrated them during the final stages of its growth process. Said server contained a record of the discarded ‘Project Gestalt’, as well as information on the human who was the first successful example of the Gestalt process.

“Having acquired information regarding humanity, the network’s structure changed once more, becoming what might better be called a meta network (or a ‘concept’, to borrow the words of the machines). This led directly to the formation of the ego we identify as N2.

“…So then! To sum up: For hundreds of years, we’ve been fighting a network of machines with the ghost of humanity at its core. We’ve been living in a stupid ****ing world where we fight an endless war that we COULDN’T POSSIBLY LOSE, all for the sake of some Council of Humanity on the moon that doesn’t even exist.”

The obvious way to read this is to take it at face value: aliens created machines that killed them; these machines fought the androids; the machines ultimately learned about the real events of Project Gestalt and evolved, etc. But there’s another potential interpretation of this information available to us: suppose that aliens created a vast data structure, with “machine lifeform” programs that were governed by an overarching network with some sort of artificial intelligence. The network was designed with the purpose of “defeating enemies”; after generating and killing virtual representations of its creators, the only network-independent entities it knew, the network had to find a further, more sustainable way to fulfill its purpose. To this end, the network generated a virtual history of humanity and Project Gestalt within the data structure, along with the subsequent androids whose express purpose, protecting humanity, would necessarily put them into conflict with the machine lifeforms, thereby ensuring that the network would always be able to strive towards its purpose of defeating enemies. On such an interpretation, the worlds of Drakengard, Nier, and NieR: Automata are just the data structure generated by the machines’ network and meta-network: the network is the cause of those worlds, rather than just another element contained within those worlds.

Of course, the network and its machines couldn’t fulfill their purpose of defeating enemies simply by programming other entities to attack them: this would effectively constitute a fight against oneself, which is no real fight as such. So, the natural solution was to enable some external agency to control the androids and direct them to fight against the machines, and this external agency is what the player provides. The interesting, unintended consequence of the player’s introduction to this data structure (returning to the themes of the last section) is that the player’s sentience ends up “infecting” otherwise non-sentient computer programs with genuine sentience, which turns what was once a mere data structure with quasi-artificial intelligence into a virtual world that supports sentient virtual beings.

To reiterate, this interpretation is absolutely wild. Nevertheless, I think there’s enough evidence for it within the game to at least consider it as a seriously possible interpretation. Consider as further information about the machine network the monolithic Tower that emerges after the death of 2B—the Tower in which N2 resides, and in which A2 and 9S face each other. The purpose of this Tower is expressed by N2 directly to 9S, as he is losing consciousness during Ending D. It’s worth quoting what 9S learns from N2 about the Tower.

The Tower in NieR: Automata.

“This tower is a colossal cannon built to destroy the human server. Destroy the server… and rob the androids of their very foundation. That was the plan devised by [the Red Girls—i.e. N2].

“But they changed their mind. They saw us androids. They saw Adam. And Eve. They saw how we lived, considered the meaning of existence, and came to a different conclusion.

“This tower doesn’t fire artillery. It fires an ark. An ark containing memories of the foolish machine lifeforms. An ark that sends those memories to a new world.

“Perhaps they’ll never reach that world. Perhaps they’ll wander an empty sky for eternity. It’s all the same to the girls. For them, time is without end.”

As with the Machine Research Report above, we could interpret this in the obvious and literal way, but it seems like there’s another reading available that resonates with the radical interpretation we’re presently considering. On this alternative reading, the Tower is something like the central hub that generates the virtual world. Its libraries of “information” with various port numbers are actually libraries of functions to call to instantiate and run all the various virtual entities that constitute the network’s world; the network planned to fulfill its purpose (“defeat the enemy”) by annihilating its enemies (this is the discussion of the Tower as a “colossal cannon”), until the network realized it could better fulfill its purpose in perpetuity by using the input of a human to re-instantiate the network’s virtual world and enemies over and over again. Remember the fact that NieR: Automata has 26 endings? On the wild interpretation we’re currently considering, the multitude of endings is explained as the network’s way of prompting players to “send the memories” of the virtual world’s entities in the game to “a new world”; that is, a new possible outcome of the game. By constantly replaying and exploring all of the possibilities of the game, the player allows the network and its machines to infinitely strive to fulfill their purpose of defeating the enemy. In this way, the very structure of the game reinforces the idea that the machine network generated a virtual world for the player to engage in order to fulfill the network’s purpose of “defeating the enemy.”

As I mentioned earlier, the way in which the game presents its fictional content to the player further reinforces this wild theory that its world is just a data structure. The loading screens in the game present presumably in-game data about the various vitals and systems pertaining to whichever android is serving as the player’s avatar; pods are able to use the loading screen as a communication interface, further implying its in-game status as some sort of abstract data structure; the entire world as presented to the player will sometimes appear to “glitch” when all is not right with the avatar android’s sensory systems, even though the world is not presented to the player through the android’s visual field; and the omnipresence of the virtual “data space” in which 9S can hack (appearing everywhere from machine lifeforms, to the minds of androids, to locks, to seals on the Tower) further suggests that the world could foundationally be just a virtual data structure. Taken individually, each of these data could be furnished with an alternative explanation; yet taken holistically, together with the previous considerations about the origin of the network, they make it at least reasonable to seriously consider that the world of the game is itself nothing more than a video game generated by the machine network.

This analysis of the game’s metaphysics is of course controversial, and I’m not nearly as confident in it as I am in the previous section’s conclusions about the player as the metaphysical basis for sentience in the fiction. Yet the analysis has distinctive merits. NieR: Automata is a game that is obsessed with the formal elements of video games: machine lifeforms are designed with the purpose of defeating enemies (in other words, they are meant to be enemies to the avatar), and avatars (the YoRHa androids) are designed with the purpose of defending humanity (in other words, they serve humanity while also being directed by the inputs of an actual human player). This metaphysical analysis explains these parallels between narrative form and content by saying that the game’s fictional world just is the virtual world of a video game, and its constituent characters are designed accordingly. It also captures the narrative significance of the wide array of endings that the game has: whereas we would otherwise presumably have to admit that there’s no intrinsic narrative reason why the game has so many possible endings (we might instead simply say something like “the developers thought it would be more entertaining,” which doesn’t seem as satisfying an explanation), we can instead say on this account that the machine network constructed the world in this way in order to keep the player coming back and thereby sustaining the network’s purpose. So, although this section is not intended as a staunch defense of this interpretation of the game’s world, it is an invitation to take seriously the idea that NieR: Automata’s universe might really be what it most immediately appears to be: a video game.

4. The Ethics of Being a Sentience-Source

Suppose you find my above arguments convincing. You might still feel the urge to ask: “So what?” After all, I was very clear at the outset of this paper that analyses of a narrative’s metaphysical foundation needn’t have any direct bearing on how we interpret the events of that narrative. If that’s true, then why should we even bother with NG Analysis?

Well, in the first place, I should hope it’s apparent by now that NG Analysis does have implications for how we understand a video game and its fiction, even if it doesn’t directly bear on events in the game. I imagine, for instance, that we might feel different playing through NieR: Automata and thinking that its fictional world is fictionally just a data structure, versus playing through the same game and thinking its fictional world should be understood as fictionally real in the same way that our actual world is understood as real. Or consider how different the series of games would be if sentience arose intrinsically from Replicants, androids, and machine lifeforms, rather than arising derivatively from the sentience of the player. On that alternative understanding of the games’ metaphysics, the games would be presenting a world in which sentience can naturally arise out of programmed machines. In contrast, that isn’t the case on my interpretation: because the sentience of all these entities is ultimately grounded in the player’s sentience, machines only end up being sentient because the sentience of a naturally sentient lifeform (the human player) is shared with the machine. I take it that a fictional world in which intrinsically sentient machines are possible is crucially different from a fictional world in which such machines are not possible.

But suppose now for the sake of argument that the above considerations don’t move you. I want to close by considering one other way in which the analysis of NieR: Automata’s metaphysics deeply matters: namely, it determines the ethical commitments that the player has within the game to androids, pods, machine lifeforms, and other players.

If a given entity is sentient, then we typically think that the entity has moral rights; that is, there are morally permissible and morally impermissible ways for a moral agent (like a human) to treat that entity. Because androids, machine lifeforms, and (eventually) pods are sentient within the fiction of NieR: Automata, that means that, fictionally, there are right and wrong ways to treat them. These entities of course don’t have real moral rights because it isn’t the case that the programs representing them in the video game are literally capable of robust artificial intelligence, but when we engage in the fiction, it stands to reason that we must treat them as fictional entities with moral rights because of their fictional sentience. But notice that, depending on your preferred metaphysics of sentience in the game, the sense in which these entities have moral rights will differ. If you think that these entities naturally became sentient independently of the player’s sentience, then they will have fictional moral rights regardless of whether the player interacts with the fictional world or not. On the other hand, if you agree with me that the sentience of these entities fundamentally depends on the sentience of the player, then it follows that these entities only have moral rights so long as the player interacts with the game’s world and thereby renders them sentient.

Why should these ethical considerations be any more compelling a case for the value of NG Analysis than the earlier considerations were? Because, it turns out, these ethical considerations will determine what choice you should make at a crucial juncture in the game’s narrative.

In Ending E of NieR: Automata, Project YoRHa enters its final phase: destruction of all androids and deletion of all data. Pods 153 and 042, together with the player, decide to recover the data of 9S, 2B, and A2 (the avatar androids)—thereby preserving the player’s data and allowing the player to continue exploring the game’s world and possibilities. In order to recover the androids’ data, however, the player must complete an exceedingly challenging mini-game in which she pilots a digital ship that destroys all the names in the game’s credits, all while avoiding myriad projectiles that the names are firing at the ship.

The credits-based mini-game in Ending E. Getting hit by three projectiles total is fatal.

It’s very difficult to complete this mission alone; however, after failing several times (assuming the player is connected to the online network of other players), the player will receive a “rescue offer” from other players. If the player accepts, then the ships of other players will join the player’s ship, making the mission extremely easy; however, every time a projectile connects with the pack of ships, another player’s data (not the original player’s) is lost. Once the player completes the mission, the androids’ data is successfully restored, and the pods offer the player an option: if she so chooses, the player can sacrifice her own data in order to help another player reach this ending, just as she was (presumably) helped in reaching the ending. At the price of the save data and records you have accumulated in the game, you can help another player—a perfect stranger.[5] Here’s the crucial ethical choice: do you agree to help the other player or not?

If you have the view that androids, machine lifeforms, and pods are fictionally sentient independent of you, the player, then, within the context of the fiction, these entities have moral rights against you no matter what. Given that choosing to delete your save data plausibly entails more-or-less “erasing” these entities, it stands to reason that such a view would forbid you from deleting your save data: to do so would be to help a stranger at the cost of annihilating countless sentient beings. In contrast, if you have the view that the fictional sentience of these entities fundamentally depends on the sentience of the player, then it follows that, were you to withdraw yourself from that fiction (for instance, by deleting your save data), these entities wouldn’t be fictionally sentient anymore, and thus wouldn’t have fictional moral rights against you. On such a view, helping a stranger would not transgress against the moral rights of anyone, since, upon deleting your save data, the androids, machine lifeforms, and pods would lose their foundational connection to your sentience, from which their own sentience derived. Other things being equal, you probably ought to help the stranger, since you were probably helped by strangers in successfully reaching Ending E; it follows on this view that you ought to delete your save data. So your view of the metaphysics of sentience in NieR: Automata could end up determining what you morally ought to do within the fiction when presented with this choice at the end of Ending E. If you think that your choices as a player within a video game matter at all, then this means you can’t afford to overlook the metaphysical foundation of NieR: Automata.

Conclusion

NieR: Automata, as I said at the outset, is a philosophically rich game across a wide variety of dimensions. I’ve only aimed in this paper to analyze the most foundational of those dimensions: the metaphysics of the game’s fiction. But those metaphysics, we’ve seen, are quite illuminating with respect to the rest of the game: they afford the player a central role as the wellspring of sentience in the game’s world, and they suggest new ways of grounding the self-consciously “video-game” aspects of the game’s narrative. These metaphysics may well be part of why the game’s exploration of sentience and the meaning of being human is so compelling: even as machines and androids wrestle with these concepts, the sentience they are trying to understand is ultimately your very own sentience; the humanity they want to know is your humanity. The human in NieR: Automata, therefore, is the one behind the controller.

[1] Obviously, these are both toy examples, and it isn’t obvious that either of them is the correct account of Link’s time-traveling abilities.

[2] I say my work “doesn’t necessarily” pose a threat to that theory because there surely may be specific cases and ways in which an account of a narrative’s fictional grounds might restrict the set of possible interpretations of that narrative’s events. My point is simply that there is no a priori, categorical entailment relation between theories of a narrative’s fictional grounds (NG Analyses) and theories of the meaning of that narrative’s events (NE Analyses).

[3] In precisely what sense does Nier “serve” the player? An easy response would be to say that the player “controls” Nier in just the way that the literal control mechanics of the game suggest. If you’ve read my recent work on the foundations of video game storytelling, then you know I don’t think it’s right to say that players control avatars in that way; however, on my view, the explanation would simply be that the player already occupies a fictional role in the grounding of the game’s narrative, and Nier simply embellishes that fictional role by identifying it as a human, extra-dimensional entity controlling Nier. All of which is to say: my preferred view of video game metaphysics supports the interpretation of Nier that I offer here, but one needn’t subscribe to my broader video-game metaphysics in order to endorse this interpretation of Nier.

[4] While the interpretation I’m considering here is radical, it’s not without precedent: my most recent work on Xenoblade Chronicles defends the view that its universe (or, at least, the main universe within the game) is best understood to fictionally be a computer-generated world with external input from a player.

[5] There are of course ways to avoid the hard choice here by, for example, backing up your save data on an external source that the game can’t delete. I’m ignoring such methods on the grounds that they are illegitimate responses to the choice within the context of the fiction.